Living Computers

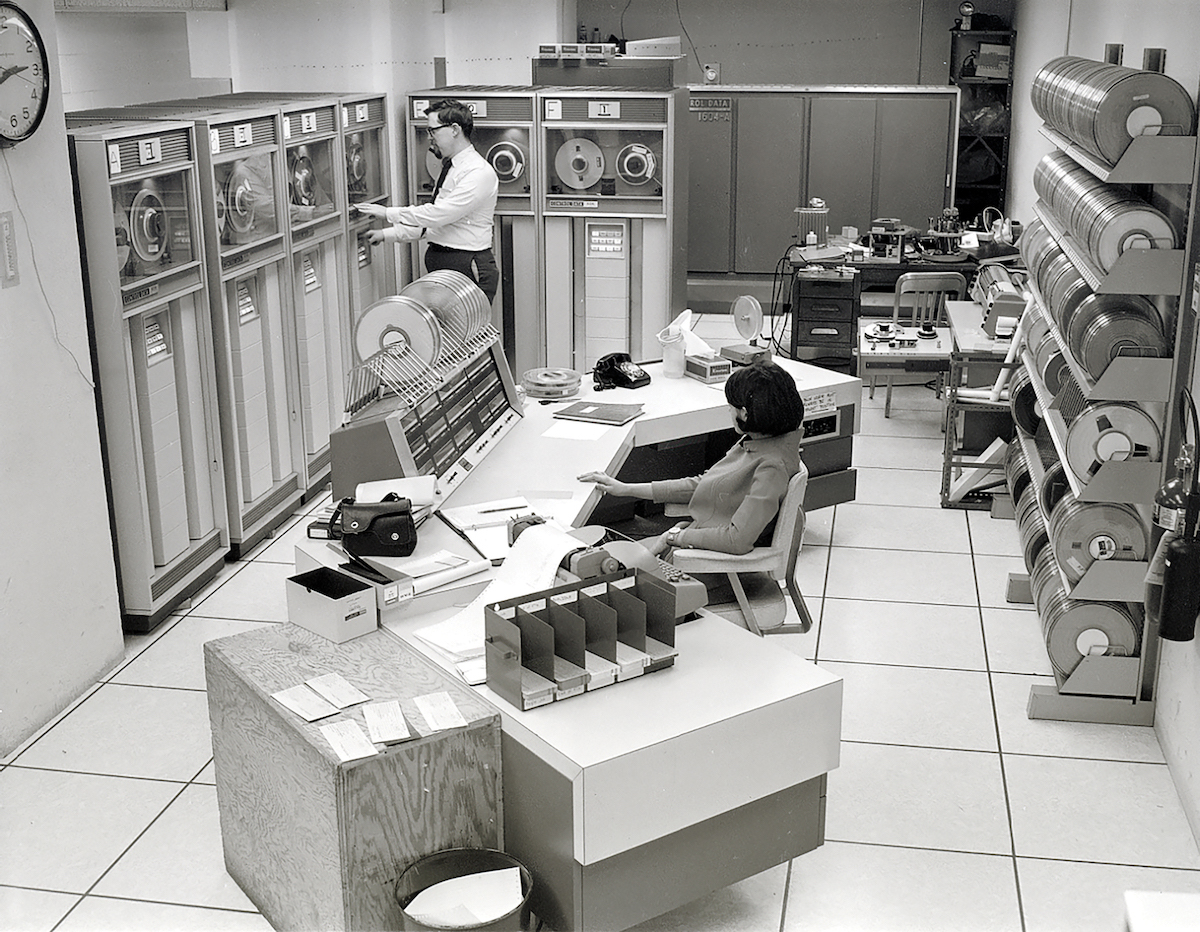

In 1967, the idea of computer science as a distinct discipline seemed outlandish enough that three leaders of the movement felt the need to write a letter to Science addressing the question, “What is computer science?” In their conclusion, Allen Newell, Alan J. Perlis, and Herbert A. Simon firmly asserted that computer science was a discipline like botany, astronomy, chemistry, or physics—a study of something dynamic, not static: “Computer scientists will study living computers with the same passion that others have studied plants, stars, glaciers, dyestuffs, and magnetism; and with the same confidence that intelligent, persistent curiosity will yield interesting and perhaps useful knowledge.”

Speed forward six decades, and the application of “intelligent, persistent curiosity” has succeeded in routing nearly all aspects of daily life through computing machines. Objects, such as cars, that once seemed reassuringly analog now re-render themselves on a regular basis; in the past month alone, 2 million electric cars were recalled for updates to their self-driving software. Meanwhile, generative artificial intelligence powers hundreds of internet-based news sites, fueling concerns about misinformation and disinformation—not to mention fear for the profession of journalism. And digital communication has become a front in modern armed conflict: the Russian military has been accused of hacking Ukraine’s cell and internet service, which shut off streetlights and missile warning systems. The shifting meanings of “truth” and “news”—let alone “war”—are not strictly within the scope of computer science, but none of these concepts would be intelligible without it.

Given the eventual success of computer science as a discipline, the defensive tone of Newell, Perlis, and Simon’s letter is surprising. Just two years before, in 1965, the three had founded one of the country’s first computer science departments at Carnegie Mellon University. And they had little patience for doubters. “There are computers. Ergo, computer science is the study of computers…. It remains only to answer the objections posed by many skeptics,” the authors quipped in their letter. They efficiently dismissed six objections, as handily as you’d expect: Newell and Simon were members of the National Academy of Sciences, and Perlis was a member of the National Academy of Engineering. Newell and Perlis won the Turing Award and Simon the Nobel Prize.

In defining the discipline of computer science, the three had what seems to be a premonition of today’s hybrid reality in which computers have spilled across boundaries to mediate the world. “‘Computers’ means ‘living computers’—the hardware, their programs or algorithms, and all that goes with them. Computer science is the study of the phenomena surrounding computers.”

With so many aspects of life now fitting under the phenomena of “living” computation, the initial logic behind the creation of the discipline of computer science is getting turned on its head. The totalizing power of computational machines means that making sense of the present requires insights from disciplines that once seemed hopelessly removed from technology—like philosophy, history, and sociology.

In this issue, philosopher C. Thi Nguyen writes about what his field has revealed about the inherent subjectivity and potential weaknesses of data. “When a person is talking to us, it’s obvious that there’s a personality involved,” he writes. “But data is often presented as if it arose from some kind of immaculate conception of pure knowledge,” obscuring the political compromises and judgement calls that make the gathering of data possible. He quotes the historian of science Theodore Porter on this sleight of hand: “Quantification is a way of making decisions without seeming to decide.”

The same could be said for living computers. As algorithms have become embedded in our lives, through social media and now artificial intelligence, it’s increasingly difficult to tell where the decisions are made. In this issue, a collection of historians, sociologists, communications scholars, and an anthropologist share useful insights into generative AI’s effect on society. They explore how the technology is changing cultural narratives, redefining the value of human labor, and outstripping reliable conventions of knowledge, all in the interest of protecting society from AI’s harms.

By looking at generative AI through the lens of the humanities, these scholars reveal new pathways for equitable governance. “The destabilization around generative AI is also an opportunity for a more radical reassessment of the social, legal, and cultural frameworks underpinning creative production,” write AI researcher Kate Crawford and legal scholar Jason Schultz. “Making a better world will require a deeper philosophical engagement with what it is to create, who has a say in how creations can be used, and who should profit.”

These insights have long underpinned Issues’ work, but they are more urgent now. In 2018, a National Academies report, known as Branches From the Same Tree, proposed that humanities education be more tightly coupled with training in science, technology, engineering, and mathematics (STEM), along with medicine. “Given that today’s challenges and opportunities are at once technical and human, addressing them calls for the full range of human knowledge and creativity. Future professionals and citizens need to see when specialized approaches are valuable and when they are limiting, find synergies at the intersections between diverse fields, create and communicate novel solutions, and empathize with the experiences of others.” Already, this somewhat defensive advocacy for the value of the humanities is starting to seem as prescient as the 1967 call for computer science.

The other half of the story of living computers is that over the last 50 years, fostering technological industry has become a policy imperative at federal, state, and local levels. In this magazine’s first issue, in 1984, then governor of Arizona Bruce Babbitt wrote that state governments had discovered scientific research and technological innovation as “the prime force for economic growth and job creation.” Pointing to the University of Texas at Austin’s success with the Balcones Research Center, Babbitt compared the frenzy to turn university research into an economic propellant to the nineteenth century’s Gilded Age, “when communities vied to finance the transcontinental railroads.”

Forty years later, the search for the keys to enduring regional growth has become ever more frantic, while income inequality has grown tremendously. Even as economic stagnation and declining global competitiveness contribute to a sense of drag, faith remains in technological innovation as a silver bullet. The White House heralded the 2022 CHIPS and Science Act as positioning US workers, communities, and businesses to “win the race for the twenty-first century.”

But as Grace Wang argues in this issue, the old, simplistic sense of how innovation can catalyze regional economies has been surpassed by a recognition of the complexity of that process. Today’s innovation districts involve dense concentrations of people with “colocation of university research and education facilities, industry partners, startup companies, retail, maker spaces, and even apartments, hotels, and fitness centers.” If the traditional vision of harvesting the fruits of university innovation involved the provision of durable goods like laboratories and supercomputers, today’s research clusters require an entire upscale digital lifestyle: good coffee, good venture capital, good vibes, and good gyms to counteract all that screen time.

The harder trick may be helping those place-based ecosystems to persist. Wang observes that for a regional innovation center to last, it must draw a steady stream of new workers. The entire society around the region must be transformed so that children can imagine themselves as part of this innovation ecosystem from an early age. And even a traditional STEM education is not enough to create the kinds of workers who can thrive in a global competition. “They need to be collaborative team players, creative and critical thinkers, motivated value creators, and effective communicators.”

“Winning” the twenty-first century, whatever that comes to mean, will require soft skills as well as software. In a sense, Wang ends at the same place as our philosophers, sociologists, and the National Academies’ Branches report: living in the age of living computers, and profiting from it, requires understanding how societies work, how people get along, and how they make meaning together. Now should be a time of reinvigorated collaboration between STEM and humanities at every level.

But it is not. In September, West Virginia University announced that it was eliminating 28 majors, shutting down the department of world languages and linguistics, and cutting faculty in law, communications studies, public administration, education, and public health. It is just one among many state universities that have cut non-STEM classes over the past few years, including schools in Missouri, Kentucky, New York, Kansas, Ohio, Maine, Vermont, Alaska, and North Dakota.

Rural states, in particular those that have lost jobs, increasingly see STEM as their lifeline. But in places with few options, eliminating humanities risks creating environments that fall further behind on providing the soft services and the critical thinkers necessary for industrial competitiveness. STEM degrees may initially make students a better fit for employers, but who wants to hang around and engineer innovations in a place without coffee shops and art, music, and theater? Social transformation is an inherently cultural activity. Treating STEM and the humanities as mortal competitors for scarce funding—or worse, as a moral competition between “problem solvers” and “problem wallowers”—is not a wise industrial strategy.

Newell, Perlis, and Simon’s vision of “living computers” has come to pass, but paradoxically that has only increased the necessity of other disciplines to understand and remake the world.