RIP: The Basic/Applied Research Dichotomy

Terminology that does not reflect the rich connectivity and interaction of many types of research is a barrier to developing policies built on the realities of science and technology.

U.S. science policy since World War II has in large measure been driven by Vannevar Bush’s famous paper Science—The Endless Frontier. Bush’s separation of research into “basic” and “applied” domains has been enshrined in much of U.S. science and technology policy over the past seven decades, and this false dichotomy has become a barrier to the development of a coherent national innovation policy. Much of the debate centers on the appropriate federal role in innovation. Bush argued successfully that funding basic research was a necessary role for government, with the implication that applied research should be left to the auspices of markets. However, the original distinction does not reflect what actually happens in research, and its narrow focus on the stated goals of an individual research project prevents us from taking a more productive holistic view of the research enterprise.

By examining the evolution of the famous linear model of innovation, which holds that scientific research precedes technological innovation, and the problematic description of engineering as “applied science,” we seek to challenge the existing dichotomies between basic and applied research and between science and engineering. To illustrate our alternative view of the research enterprise, we will follow the path of knowledge development through a series of Nobel Prizes in Physics over several decades.

This mini-history reveals how knowledge grows through a richly interwoven system of scientific and technological research in which there is no clear hierarchy of importance and no straightforward linear trajectory. Accepting this reality has profound implications for the design of research institutions, the allocation of resources, and the national policies that guide research. This in turn can open the door to game-changing discoveries and inventions and put the nation on the path to a more sustainable science and technology ecosystem.

History of an idea

Although some observers cite Vannevar Bush as the source of the linear model of innovation, the concept actually has deep roots in long-held cultural assumptions that give priority to the work of the head over the work of the hand and thus to the creation of scientific knowledge over technical expertise. If one puts this assumption aside, it opens up a new way of understanding the entire innovation process. We will focus our attention on how it affects our understanding of research.

The question of whether understanding always precedes invention has long been a troubling one. For example, it is widely accepted that many technologies reached relatively advanced stages of development before detailed scientific explanations about how the technologies worked emerged. In one of the most famous examples, James Watt invented his steam engine before the laws of thermodynamics were postulated. In fact, the science of thermodynamics owes a great deal to the steam engine. This and other examples should make it clear that assumptions about what has been called basic and applied research do not accurately describe what actually happens in research.

In 1997, Donald Stokes’s book Pasteur’s Quadrant: Basic Science and Technological Innovation was published posthumously. In this work, Stokes argued that scientific efforts were best carried out in what he termed “Pasteur’s Quadrant,” where researchers are motivated simultaneously by expanding understanding and increasing our abilities (technological, including medicine) to improve the world. Stokes’s primary contribution was in expanding the linear model into a two-dimensional plane that sought to integrate the idea of the unsullied quest for knowledge with the desire to solve a practical problem.

Stokes’s model describes three quadrants in detail, each exemplified by a historical figure in science and technology. The pure basic research quadrant, exemplified by Niels Bohr, represents the traditional view of scientific research as being inspired primarily by a desire to extend fundamental understanding. The pure applied research quadrant is exemplified by Edison, who represents the classical inventor, driven to solve a practical problem. Louis Pasteur’s quadrant is a perfect mix of the two, inventor and scientist in one, expanding knowledge in the pursuit of practical problems. Stokes described this final quadrant as “use-inspired basic research.” The fourth quadrant is not fully described in Stokes’s framework.

The publication of Stokes’s book excited many in the science policy and academic communities, who believed it would free us from the blinders of the linear model. A blurb on the back of the book quotes U.S. Congressman George E. Brown Jr.: “Stokes’s analysis will, one hopes, finally lay to rest the unhelpful separation between ‘basic’ and ‘applied’ research that has misinformed science policy for decades.” However, it has become clear that although Stokes’s analysis cleared the ground for future research, it did not go far enough, nor did his work result in sufficient change in how policymakers discuss and structure research. Whereas Stokes notes how “often technology is the inspiration of science rather than the other way around,” his revised dynamic model does not recognize the full complexity of innovation, preferring to keep science and technology in separate worlds that mix only in the shared agora of “use-inspired basic research.” It is also significant that Stokes’s framework preserves the language of the linear model in the continued use of the terms basic and applied as descriptors of research.

We see a need to jettison this conception of research in order to understand the complex interplay among the forces of innovation. We propose a more dynamic model in which radical innovation often arises only through the integration of science and technology.

Invention and discovery

A critical liability of the basic/applied categorization is that it is based on the motivation of the individual researcher at the time of the work. The efficacy and effectiveness of the research endeavor cannot be fully appreciated in the limited time frame captured by a singular attention to the motivations of the researchers in question. Admittedly, motivations are important. Aiming to find a cure for cancer or advance the frontiers of communications can be a powerful incentive, stimulating groundbreaking research. However, motivations are only one aspect of the research process. To more completely capture the full arc of research, it is important to consider a broader time scale than that implied by just considering the initial research motivations. Expanding the focus from research motivations to also include questions of how the research is taken up in the world and how it is connected to other science and technology allows us to escape the basic/applied dichotomy. The future-oriented aspects of research are as important as the initial motivation. Considering the implications of research in the long term requires an emphasis on visionary future technologies, taking into account the well-being of society, and not being content with a porous dichotomy between basic and applied research.

We propose using the terms “invention” and “discovery” to describe the twin channels of research practice. For us, invention is the “accumulation and creation of knowledge that results in a new tool, device, or process that accomplishes a specific purpose.” Discovery is the “creation of new knowledge and facts about the world.” Considering the phases of invention and discovery along with research motivations and institutional settings enables a much more holistic and long-term view of the research process. This allows us to examine the ways in which research generates innovation and leads to further research in a virtuous cycle.

Innovation is a complex, nonlinear process. Still, straightforward and sufficiently realized representations such as Stokes’s Pasteur’s quadrant are useful as analytical aids. We propose the model of the discovery-invention cycle, which will serve to illustrate the interconnectedness of the processes of invention and discovery, and the need for consideration of research effectiveness over longer time frames than is currently the case. Such a model allows for a more reliable consideration of innovation through time. The model could also aid in discerning possible bottlenecks in the functioning of the cycle of innovation, indicating possible avenues for policy intervention.

A family of Nobel Prizes

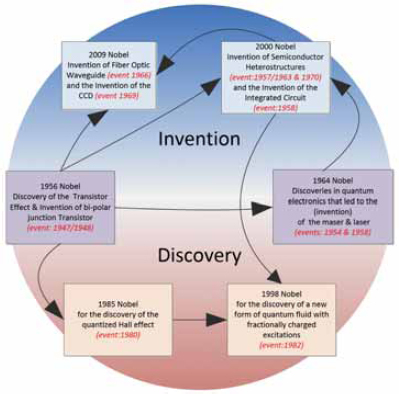

To illustrate this idea, consider Figure 1, in which we trace the evolution of the current information and communication age. What can be said about the research that has enabled the recent explosion of information and communication technologies? How does our model enable a deeper understanding of the multiplicity of research directions that have shaped the current information era? To fully answer these questions, it is necessary to examine research snapshots over time, paying attention to the development of knowledge and the twin processes of invention and discovery, and tracing their interconnections through time. To our mind, the clearest source of snapshots that illustrate the evolution of invention and discovery that enabled the information age is the Nobel Prize awards.

We have thus examined the Nobel Prizes in Physics from 1956, 1964, 1985, 1998, 2000, and 2009, which were all related to information technologies. We describe these kinds of clearly intersecting Nobels as a family of prizes in that they are all closely related. Similar families can be found in areas such as nuclear magnetic resonance and imaging.

The birth of the current information age can be traced to the invention of the transistor. This work was recognized with the 1956 Physics Nobel Prize awarded jointly to William Shockley, John Bardeen, and Walter Brattain “for their researches on semiconductors and their discovery of the transistor effect.” Building on early work on the effect of electric fields on metal-semiconductor junctions, the interdisciplinary Bell Labs team built a working point-contact transistor and clearly demonstrated (discovered) the transistor effect. This work and successive refinements enabled a class of devices that successfully replaced electromechanical switches, allowing for successive generations of smaller, more efficient, and more intricate circuits. Although the Nobel was awarded for the discovery of the transistor effect, the team of Shockley, Bardeen, and Brattain had to invent the point-contact transistor to demonstrate it. Their work was thus of a dual nature, encompassing both discovery and invention. The discovery of the transistor effect catalyzed a whole body of further research into semiconductor physics, increasing knowledge about this extremely important phenomenon. The invention of the point-contact transistor led to a new class of devices that effectively replaced vacuum tubes and catalyzed further research into new kinds of semiconductor devices. The 1956 Nobel is therefore exemplary of a particular kind of knowledge-making that affects both later discoveries and later inventions. We call this kind of research radical innovation. The 1956 prize is situated at the intersection of invention and discovery (see Figure 1), and it is from this prize that we begin to trace the innovation cycle for the prize family that describes critical moments in the information age.

FIGURE 1

The innovation cycle in information and communication technologies (dates of events are in red).

The second prize in this family is the 1964 Nobel Prize, half of which was awarded to Charles Townes and the other half jointly to Nicolay Basov and Aleksandr Prokhorov. The prize matters to this story because most global communications traffic is carried by transcontinental fiber optic networks, which use light as the signal carrier. Townes’s work on the stimulated emission of microwave radiation earned him his half of the Nobel. This experimental work showed that it was possible to build amplifier oscillators with low noise characteristics capable of the stimulated emission of microwaves with almost perfect amplification. The maser effect (microwave amplification by the stimulated emission of radiation) was observed in his experiments. Later, Basov and Prokhorov, along with Townes, extended the maser effect to consideration of its application in the visible spectrum, and thus the laser was invented. Laser light allows for the transmission of very high-energy pulses of light at very high frequencies and is crucial for modern high-speed communication systems. This Nobel acknowledges critical work that was simultaneously discovery (the maser effect) and invention (the maser and the laser), both central to the rise of the information and communication age. Thus, the 1964 Nobel is also situated at the intersection of invention and discovery. The work on lasers built directly on previous work by Einstein, but practical and operational masers and lasers were enabled by advancements in electronic amplifiers made possible by the solid-state electronics revolution, which began with the invention of the transistor.

Although scientists and engineers conducted a great deal of foundational work on the science of information technology in the 1960s, the next wave of Nobel recognition for this research did not come until the 1980s. Advancements in the semiconductor industry led to the development of new kinds of devices such as the metal oxide silicon field effect transistor (MOSFET). The two-dimensional nature of the conducting layer of the MOSFET provided a convenient avenue to study electrical conduction in reduced dimensions. Klaus von Klitzing discovered that under certain conditions, voltage across a current-carrying wire increased in uniform steps. Von Klitzing received the 1985 Nobel Prize for what is known as the quantized Hall effect. This work belongs in the discovery category, although it did have important useful applications.

The 2000 Nobel Prize was awarded jointly to Zhores Alferov and Herbert Kroemer for “developing semiconductor heterostructures” and to Jack Kilby for “his part in the invention of the integrated circuit.” Both of these achievements can be classified primarily as inventions, and both built on work done by Shockley et al. This research enabled a new class of semiconductor device that could be used in high-speed circuits and optoelectronics. Alferov and Kroemer showed that creating a double junction with a thin layer of semiconductors would allow for much higher concentrations of holes and electrons, enabling faster switching speeds and allowing for laser operation at practical temperatures. Their invention produced tangible improvements in lasers and light-emitting diodes. It was the work on heterostructures that enabled the modern room-temperature lasers used in fiber optic communication systems. Alferov and Kroemer’s work on heterostructures also led to the discovery of a new form of matter, as discussed below.

Jack Kilby’s work on integrated circuits at Texas Instruments earned him his half of the Nobel for showing that entire circuits could be realized with semiconductor substrates. Shockley, Bardeen, and Brattain had invented semiconductor-based transistors, but these were discrete components and were used in circuits with components made from other materials. The genius of Kilby’s work was in realizing that semiconductors could be arranged in such a way that the entire circuit, not just the transistor, could be realized on a chip. This invention of a process of building entire circuits out of semiconductors allowed for economies of scale, bringing down the cost of circuits. Further research into process technologies allowed escalating progress on the shrinking of these circuits, so that within a few decades, chips containing billions of transistors were possible.

Alferov and Kroemer’s work was also valuable to Horst Stormer and his collaborators, who combined it with advancements in crystal growth techniques to produce two-dimensional electron layers with mobility orders of magnitude greater than in silicon MOSFETs. Stormer and Daniel Tsui then began exploring some unusual behavior observed in two-dimensional electrical conduction. They discovered a new kind of particle that appeared to have only one-third the charge of the previously thought-indivisible electron. Robert Laughlin then showed through calculations that what they had observed was a new form of quantum liquid in which interactions between billions of electrons led to swirls in the liquid behaving like particles with a fractional electron charge. This phenomenon is clearly a new discovery, but it was enabled by previous inventions and resulted in important practical applications such as the high-frequency transistors used in cell phones. For their work, Laughlin, Stormer, and Tsui were awarded the 1998 Nobel Prize in Physics, an achievement situated firmly in the discovery category.

The 2009 Nobel was awarded to Charles Kao for “groundbreaking achievements concerning the transmission of light in fibers for optical communication” and to Willard Boyle and George Smith for “the invention of the imaging semiconductor circuit—the CCD.” Both of these achievements were directly influenced by previous inventions and discoveries in this area. Kao was primarily concerned with building a workable waveguide for light for use in communications systems. His inquiries led to astonishing process improvements in glass production, as he predicted that glass fibers of a certain purity would allow long-distance laser light communication. Of course, the work on heterostructures that allowed for room-temperature lasers was critical to assembling the technologies of fiber communication. Kao, however, not only created new processes for measuring the purity of glass but also actively encouraged various manufacturers to improve their processes in this respect. Kao worked directly in industry, and his work built on that of Alferov and Kroemer, enabling the physical infrastructure of the information age. Boyle and Smith continued the tradition of Bell Labs inquiry. Adding a brilliant twist to the work that Shockley et al. had done on the transistor, they designed and invented the charge-coupled device (CCD), a semiconductor circuit that enabled digital imagery and video. Kao’s work was clearly aimed at discovering the ideal conditions for the propagation of light in fibers of glass, but he also went further in shepherding the invention and development of the new fiber optic devices.

These six Nobel Prizes highlight the multiple kinds of knowledge that play into the innovations that have enabled the current information and communications age. They range from the discovery of the transistor effect, which relied on the invention of the point-contact transistor and led to all the marvelous processors and chips in everything from computers to cars, to the invention of the integrated circuit, which made the power of modern computers possible while shrinking their cost and increasing accessibility. The invention of fiber optics built on previous work on heterostructures and made the physical infrastructure and speed of the global communications networks possible. In fact, the desire to improve the electrical conductivity of heterostructures led to the unexpected discovery of fractional quantization in two-dimensional systems and a new form of quantum fluid. Each of these could probably be classified as “basic” or “applied” research, but that classification obscures the complexity and multiple nature of the research described above and does not help remove the prejudices of many against what is now labeled as “applied research.” Thinking in terms of invention and discovery through time helps reconstruct the many pathways that research travels along in the creation of radical innovations.

In our model, the discovery-invention cycle can be traversed in both directions, and research knowledge is seen as an integrated whole that mutates over time (as it traverses the cycle). The bidirectionality of the cycle reflects the reality that inventions are not always the product of discovery but can also be the product of other inventions. Simultaneously, important discoveries can arise from new inventions. Observing the cycle of research over time is essential to understanding how progress occurs.

Seeing with fresh eyes

The switch from a basic/applied nomenclature to discovery-invention is not a mere semantic refinement. It enables us to see the entire research enterprise in a new way.

First, it eliminates the tendency to see research proceeding on two fundamentally different and separate tracks. All types of research interact in complex and often surprising ways. To capitalize on these opportunities, we must be willing to see research holistically. Also, by introducing new language, we hope to escape the cognitive trap of thinking about research solely in terms of the researcher’s initial motivations. All results must be understood in their larger context.

Second, adopting a long time frame is essential to attaining a full understanding of the path of research. The network of interactions traced in the Nobel Prizes discussed above becomes clear only when one takes into account a 50-year history. This extended view is important to understanding the development of both novel science and novel technologies.

Third, the discovery-invention cycle could be useful in identifying problematic bottlenecks in research. Once we recognize the complex interrelationship of discovery and invention, we are more likely to see that problems can occur in many parts of the cycle and that we need to heed the interactions among a variety of institutions and types of research.

Bringing together the notions of research time horizons and bottlenecks, we argue that successful radical innovation arises from knowledge traveling the innovation cycle. If, as argued above, all parts of the innovation process must be adequately encouraged for the cycle to function effectively, then the notion of traveling also emphasizes that we should have deep and sustained communication between scientists and engineers, between theorists and practitioners. Rather than separating researchers according to their motivation, we must strive to bring all forms of research into deeper congress.

This fresh view of the research enterprise can lead us to rethinking the design of research institutions to align with the principles of long time frames, a premium on futuristic ideas, and the encouragement of interaction among different elements of the research ecosystem. This is especially pertinent in the case of the mission-oriented agencies such as the Department of Energy and the National Institutes of Health.

Implications for research policy

The pertinent question is how these insights play out in the messy world of policymaking. First, there is an obvious need to complicate the simple and unhelpful distinction between basic and applied research. The notion of the innovation cycle is a very useful aid in thinking about research holistically. It draws attention to the entirety of research practice and allows one to pose the question of public utility to an entire range of activities.

Second, the nature of the public good, and thus the appropriate role for the federal government, changes. The simple and clear notions of basic and applied were useful in one way: They provided a clear litmus test for limits to federal involvement in the research process. The idea that government funding is necessary to pursue research opportunities that aren’t able to attract private funding is a useful one that has contributed to the long-term well-being and productivity of the nation. But through the lens of the discovery-invention cycle, we can see that it would deny federal funding to some types of research that are essential to long-term progress. We suggest that federal support is most appropriate for research that focuses on long-term projects with clear public utility. The difference here is that such research could have its near-term focus on either new knowledge or new technology.

The public good must be understood over the long term, and the best way to ensure that the research enterprise contributes as much as possible to meeting our national goals is to make funding decisions about discovery and invention research in a long-term holistic context.